Keywords: Affective, Shape-change, Wearable skin, Touch

Exploring people’s emotional states when they interact with a shape-changing skin

Published in the CHI 2022 proceedings. Click to see the preprint paper.

Research story

In nature, information is conveyed not only through language but also through a variety of other channels, such as postures, shapes, ultrasound, and swarm formations (of ants, birds, bees, etc.). I’m particularly interested in the modality of shape-change.

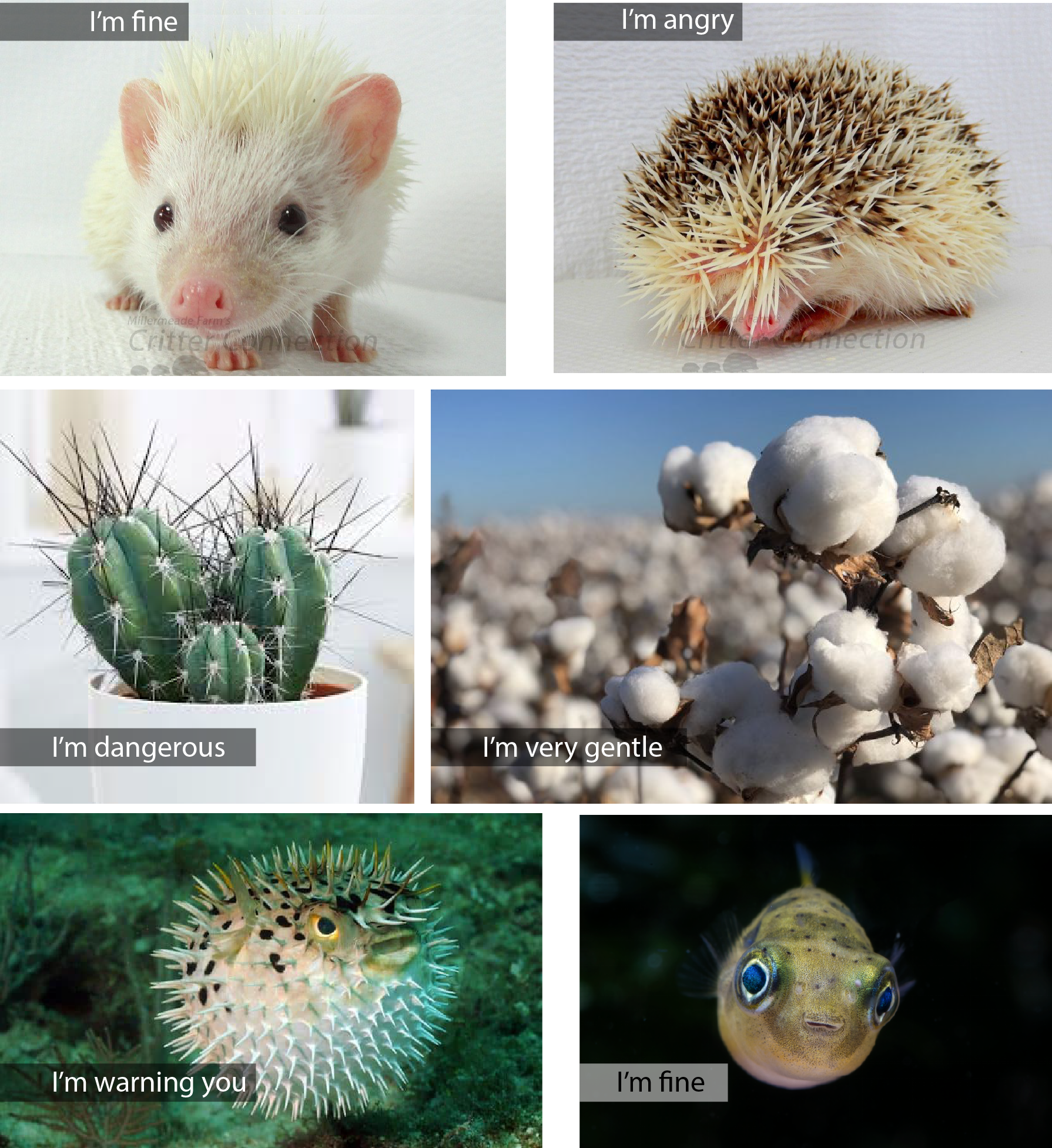

Look at the following pictures. The shapes convey very clear messages that people can perceive, and they evoke certain affective states.

(The hedgehog pictures are adapted from critterconnection.)

The question

What if an object or a robot had a skin that conveys information through a shape-change mechanism? How would humans understand the affective messages by perceiving the changes in the skin?

This project aims to understand how humans interpret affective states that are expressed through a shape-changing skin.

As a first step, we control the shape-change with three parameters: the size of the change, its speed, and its frequency.
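To make the three parameters concrete, here is a minimal sketch of how they could jointly define the skin’s displacement over time. It is an illustration only, not the implementation used in the project: the `ShapeChange` class, the `displacement` function, the normalized units, and the triangular ramp-up/ramp-down profile are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShapeChange:
    """Hypothetical parameter set; names and units are assumptions."""
    size: float       # peak displacement of the skin (normalized, e.g. 0..1)
    speed: float      # rate of displacement, in size units per second
    frequency: float  # number of change cycles per second (Hz)


def displacement(p: ShapeChange, t: float) -> float:
    """Return the skin displacement at time t (seconds).

    Assumed profile: in each cycle the skin rises at `speed` until it
    reaches `size`, falls back at the same speed, then rests at zero
    until the next cycle starts.
    """
    period = 1.0 / p.frequency
    rise = p.size / p.speed  # time needed to reach the peak
    if 2 * rise > period:
        raise ValueError("size/speed too slow to finish within one cycle")
    phase = t % period
    if phase < rise:               # rising edge
        return p.speed * phase
    if phase < 2 * rise:           # falling edge
        return p.size - p.speed * (phase - rise)
    return 0.0                     # rest until the next cycle


# Example: a full-size change, four units per second, once per second.
calm = ShapeChange(size=1.0, speed=4.0, frequency=1.0)
samples = [displacement(calm, t / 8) for t in range(9)]
```

Separating the parameters this way means each one can be varied independently when designing stimuli, for example keeping the size fixed while changing only the frequency.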

Motivation

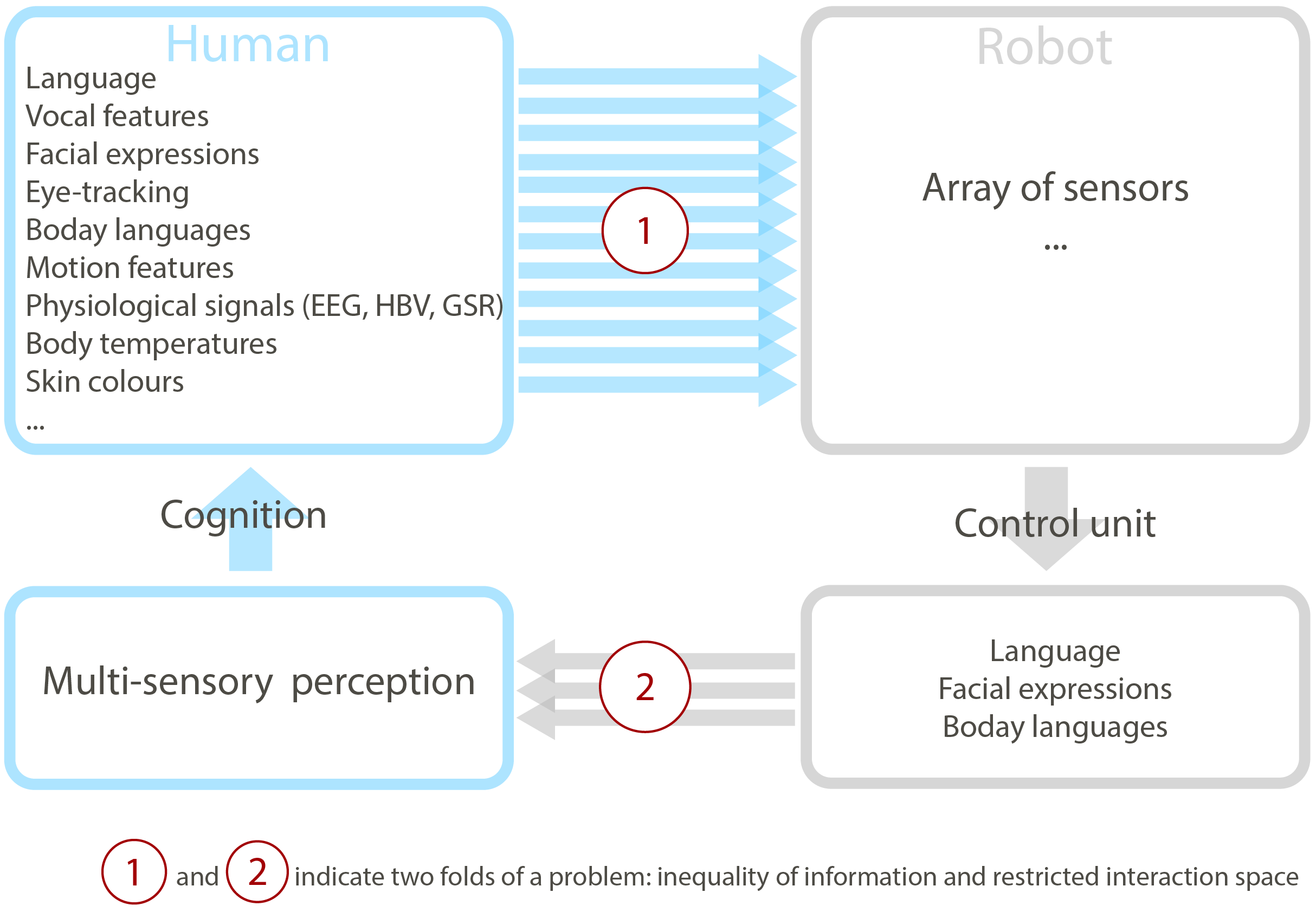

Nowadays we have algorithms, intelligent agents, and robots that are able to sense human emotions by reading facial expressions, physiological signals, body language, etc. However, robots have only limited communication channels for conveying their own rich underlying states. This problem has two aspects. On the one hand, humans become increasingly transparent to robots while robots become increasingly opaque to humans, which leads to information inequality. On the other hand, inadequate expressive channels limit the interaction space between humans and robots and degrade the user experience (UX) in human-robot/computer interaction (as shown in the figure below).